Ruby 1.9.3 p0: Investigating weirdness with HTTP POST request in net/http

Thibaut and I spent the best part of the last couple of days trying to diagnose a problem we were having trying to make a POST request using rest-client to one of our services.

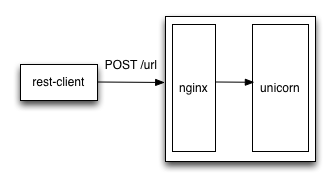

We have nginx fronting the application server so the request passes through there first:

The problem we were having was that the request was timing out on the client side before it had been processed and the request wasn’t reaching the application server.

We initially thought there might be a problem with our nginx configuration because we don’t have many POST requests with largish (40kb) payloads so we initially tried tweaking the proxy buffer size.

It was a bit of a long shot because changing that setting only reduces the likelihood that nginx writes the request body to disc and then loads it later which shouldn’t impact performance that much.

The next thing we tried was replicating the request using cURL with a smaller payload which worked fine. cURL had no problem with the bigger payload either.

We therefore thought there must be a difference in the request headers being sent by rest-client and our initial investigation suggested that it might be to do with the 'Content-Length' header.

There was a 1 byte difference in the value being sent by cURL and the one being sent by rest-client which was to do with the last character of the payload being a 0A (linefeed) character.

We changed the 'Content-Length' header on our cURL request to match that of the rest-client request (i.e. 1 byte too large) and were able to replicate the timeout problem.

At this stage we thought that calling 'strip' on the body of our rest-client request would solve the problem as the 'Content-Length' header would now be set to the correct value. It did set the 'Content-Length' header properly but unfortunately didn’t get rid of the timeout.

Our next step was to check whether or not we could get any request to work from rest-client so we tried using a smaller payload which worked fine.

At this stage Jason heard us discussing what to do next and said that he’d come across it earlier and that upgrading our Ruby Version from '1.9.3p0' would solve all our woes.

That Ruby version is a couple of years old and most of our servers are running '1.9.3p392' but somehow this one had slipped through the net.

We spun up a new server with that version of Ruby installed and it did indeed fix the problem.

However, we were curious what the fix was and had a look at the change log of the first patch release after '1.9.3p0'. We noticed the following which seemed relevant:

Tue May 31 17:03:24 2011 Hiroshi Nakamura <nahi@ruby-lang.org> * lib/net/http.rb, lib/net/protocol.rb: Allow to configure to wait server returning '100 continue' response before sending HTTP request body. See NEWS for more detail. See #3622. Original patch is made by Eric Hodel <drbrain@segment7.net>. * test/net/http/test_http.rb: test it. * NEWS: Add new feature.

One thing we noticed from looking at the requests with ngrep was that cURL was setting the 100 Continue Expect request header and rest-client wasn’t.

When the payload size was small nginx didn’t seem to send a '100 Continue' response which was presumably why we weren’t seeing a problem with the small payloads.

I wasn’t sure how to go about finding out exactly what was going wrong but given how long it took us to get to this point I thought I’d summarise what we tried and see if anyone could explain it to me.

So if you’ve come across this problem (probably 2 years ago!) it’d be cool to know exactly what the problem was.

About the author

I'm currently working on short form content at ClickHouse. I publish short 5 minute videos showing how to solve data problems on YouTube @LearnDataWithMark. I previously worked on graph analytics at Neo4j, where I also co-authored the O'Reilly Graph Algorithms Book with Amy Hodler.