A newfound respect for acceptance tests

On the project that I’ve been working on over the past few months, one of the key benefits of the application was its ability to perform various calculations based on user input.

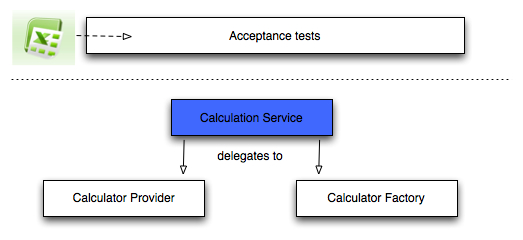

To check that these calculators were producing the correct outputs, we created a series of acceptance tests that ran directly against one of the objects in the system.

We did this by defining the inputs and expected outputs for each scenario in an Excel spreadsheet, which we converted into a CSV file and then read into an NUnit test.

It looked roughly like this:
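
A minimal sketch of such a CSV-driven test, assuming NUnit 3, might be written as follows. The `Calculator` class, its `Calculate` method, the `scenarios.csv` file name and the column layout are all hypothetical stand-ins rather than the project's actual code; `TestCaseSource` and `TestCaseData` are standard NUnit features.

```csharp
using System.Collections.Generic;
using System.Globalization;
using System.IO;
using System.Linq;
using NUnit.Framework;

// Hypothetical stand-in for the object under test; the real class is not shown here.
public class Calculator
{
    public decimal Calculate(decimal amount, decimal rate) => amount * rate;
}

[TestFixture]
public class CalculatorAcceptanceTests
{
    // Assumed CSV layout: one header row, then "scenario,amount,rate,expected" per line.
    private static IEnumerable<TestCaseData> Scenarios()
    {
        var path = Path.Combine(TestContext.CurrentContext.TestDirectory, "scenarios.csv");

        foreach (var line in File.ReadLines(path).Skip(1))
        {
            if (string.IsNullOrWhiteSpace(line)) continue;

            // Naive split: assumes no quoted fields and no commas inside values.
            var cells = line.Split(',');

            yield return new TestCaseData(
                    decimal.Parse(cells[1], CultureInfo.InvariantCulture),
                    decimal.Parse(cells[2], CultureInfo.InvariantCulture),
                    decimal.Parse(cells[3], CultureInfo.InvariantCulture))
                .SetName(cells[0]); // report each row under its scenario name
        }
    }

    [TestCaseSource(nameof(Scenarios))]
    public void ProducesExpectedOutput(decimal amount, decimal rate, decimal expected)
    {
        var actual = new Calculator().Calculate(amount, rate);

        Assert.That(actual, Is.EqualTo(expected));
    }
}
```

With this shape, each CSV row shows up as its own named test case, so a failing scenario points straight at the inputs that produced the wrong output. The sketch assumes `scenarios.csv` is copied to the test output directory.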

We found that testing the calculations like this gave us a quicker feedback cycle than testing them through the UI, both because the tests took less time to run and because we were able to home in on problematic areas of the code more quickly.

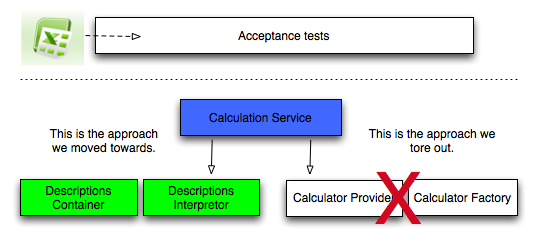

As I mentioned in a previous post, we’ve been trying to move the creation of the calculators away from the 'CalculatorProvider' and 'CalculatorFactory' so that they’re all created in one place, based on a DSL which describes the data required to initialise a calculator.

These acceptance tests acted as our safety net while we introduced this DSL into the code base, pulling out the existing code and replacing it with the new DSL.

We had to completely rewrite the 'CalculationService' unit tests, so those unit tests didn’t give us much protection while we made the changes I described above.

The acceptance tests, on the other hand, were invaluable and saved us from incorrectly changing the code even when we were certain we’d taken such small steps along the way that we couldn’t possibly have made a mistake.

This is certainly an approach I’d use again in a similar situation, although it could probably be improved by removing the step where we convert the data from the spreadsheet to a CSV file.

About the author

I'm currently working on short-form content at ClickHouse. I publish short 5-minute videos showing how to solve data problems on YouTube @LearnDataWithMark. I previously worked on graph analytics at Neo4j, where I also co-authored the O'Reilly Graph Algorithms Book with Amy Hodler.