Python/scikit-learn: Detecting which sentences in a transcript contain a speaker

Over the past couple of months I’ve been playing around with How I met your mother transcripts and the most recent thing I’ve been working on is how to extract the speaker for a particular sentence.

This initially seemed like a really simple problem as most of the initial sentences I looked at weere structured like this:

<speaker>: <sentence>If there were all in that format then we could write a simple regular expression and then move on but unfortunately they aren’t. We could probably write a more complex regex to pull out the speaker but I thought it’d be fun to see if I could train a model to work it out instead.

The approach I’ve taken is derived from an example in the NLTK book.

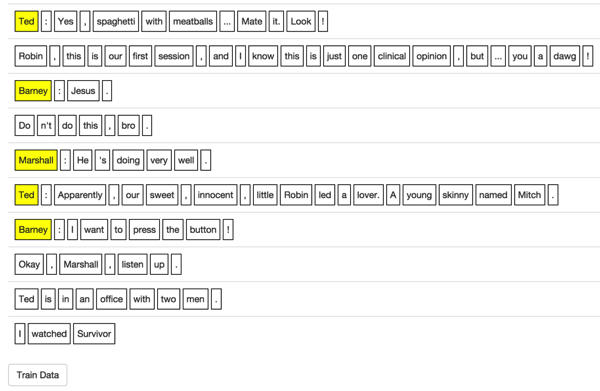

The first problem with this approach was that I didn’t have any labelled data to work with so I wrote a little web application that made it easy for me to train chunks of sentences at a time:

I stored the trained words in a JSON file. Each entry looks like this:

import json

with open("data/import/trained_sentences.json", "r") as json_file:

json_data = json.load(json_file)

>>> json_data[0]

{u'words': [{u'word': u'You', u'speaker': False}, {u'word': u'ca', u'speaker': False}, {u'word': u"n't", u'speaker': False}, {u'word': u'be', u'speaker': False}, {u'word': u'friends', u'speaker': False}, {u'word': u'with', u'speaker': False}, {u'word': u'Robin', u'speaker': False}, {u'word': u'.', u'speaker': False}]}

>>> json_data[1]

{u'words': [{u'word': u'Robin', u'speaker': True}, {u'word': u':', u'speaker': False}, {u'word': u'Well', u'speaker': False}, {u'word': u'...', u'speaker': False}, {u'word': u'it', u'speaker': False}, {u'word': u"'s", u'speaker': False}, {u'word': u'a', u'speaker': False}, {u'word': u'bit', u'speaker': False}, {u'word': u'early', u'speaker': False}, {u'word': u'...', u'speaker': False}, {u'word': u'but', u'speaker': False}, {u'word': u'...', u'speaker': False}, {u'word': u'of', u'speaker': False}, {u'word': u'course', u'speaker': False}, {u'word': u',', u'speaker': False}, {u'word': u'I', u'speaker': False}, {u'word': u'might', u'speaker': False}, {u'word': u'consider', u'speaker': False}, {u'word': u'...', u'speaker': False}, {u'word': u'I', u'speaker': False}, {u'word': u'moved', u'speaker': False}, {u'word': u'here', u'speaker': False}, {u'word': u',', u'speaker': False}, {u'word': u'let', u'speaker': False}, {u'word': u'me', u'speaker': False}, {u'word': u'think', u'speaker': False}, {u'word': u'.', u'speaker': False}]}Each word in the sentence is represented by a JSON object which also indicates if that word was a speaker in the sentence.

Feature selection

Now that I’ve got some trained data to work with I needed to choose which features I’d use to train my model.

One of the most obvious indicators that a word is the speaker in the sentence is that the next word is ':' so 'next word' can be a feature. I also went with 'previous word' and the word itself for my first cut.

This is the function I wrote to convert a word in a sentence into a set of features:

def pos_features(sentence, i):

features = {}

features["word"] = sentence[i]

if i == 0:

features["prev-word"] = "<START>"

else:

features["prev-word"] = sentence[i-1]

if i == len(sentence) - 1:

features["next-word"] = "<END>"

else:

features["next-word"] = sentence[i+1]

return featuresLet’s try a couple of examples:

import nltk

>>> pos_features(nltk.word_tokenize("Robin: Hi Ted, how are you?"), 0)

{'prev-word': '<START>', 'word': 'Robin', 'next-word': ':'}

>>> pos_features(nltk.word_tokenize("Robin: Hi Ted, how are you?"), 5)

{'prev-word': ',', 'word': 'how', 'next-word': 'are'}Now let’s run that function over our full set of labelled data:

with open("data/import/trained_sentences.json", "r") as json_file:

json_data = json.load(json_file)

tagged_sents = []

for sentence in json_data:

tagged_sents.append([(word["word"], word["speaker"]) for word in sentence["words"]])

featuresets = []

for tagged_sent in tagged_sents:

untagged_sent = nltk.tag.untag(tagged_sent)

for i, (word, tag) in enumerate(tagged_sent):

featuresets.append( (pos_features(untagged_sent, i), tag) )Here’s a sample of the contents of featuresets:

>>> featuresets[:5]

[({'prev-word': '<START>', 'word': u'You', 'next-word': u'ca'}, False), ({'prev-word': u'You', 'word': u'ca', 'next-word': u"n't"}, False), ({'prev-word': u'ca', 'word': u"n't", 'next-word': u'be'}, False), ({'prev-word': u"n't", 'word': u'be', 'next-word': u'friends'}, False), ({'prev-word': u'be', 'word': u'friends', 'next-word': u'with'}, False)]It’s nearly time to train our model, but first we need to split out labelled data into training and test sets so we can see how well our model performs on data it hasn’t seen before. sci-kit learn has a function that does this for us:

from sklearn.cross_validation import train_test_split

train_data,test_data = train_test_split(featuresets, test_size=0.20, train_size=0.80)

>>> len(train_data)

9480

>>> len(test_data)

2370Now let’s train our model. I decided to try out Naive Bayes and Decision tree models to see how they got on:

>>> classifier = nltk.NaiveBayesClassifier.train(train_data)

>>> print nltk.classify.accuracy(classifier, test_data)

0.977215189873

>>> classifier = nltk.DecisionTreeClassifier.train(train_data)

>>> print nltk.classify.accuracy(classifier, test_data)

0.997046413502It looks like both are doing a good job here with the decision tree doing slightly better. One thing to keep in mind is that most of the sentences we’ve trained at in the form '

If we explore the internals of the decision tree we’ll see that it’s massively overfitting which makes sense given our small training data set and the repetitiveness of the data: ~python >>> print(classifier.pseudocode(depth=2)) if next-word == u'!': return False if next-word == u'$': return False ... if next-word == u"'s": return False if next-word == u"'ve": return False if next-word == u'(': if word == u'!': return False ... if next-word == u'': return False if next-word == u'': return False if next-word == u',': if word == u"''": return False ... if next-word == u'--': return False if next-word == u'.': return False if next-word == u'...': ... if word == u’who': return False if word == u’you': return False if next-word == u'/i': return False if next-word == u'1': return True ... if next-word == u':': if prev-word == u"'s": return True if prev-word == u',': return False if prev-word == u'...': return False if prev-word == u'2030': return True if prev-word == '

One update I may make to the features is to include the part of speech of the word rather than its actual value to see if that makes the model a bit more general. Another option is to train a bunch of decision trees against a subset of the data and build an ensemble/random forest of those trees.

Once I’ve got a working 'speaker detector' I want to then go and work out who the likely speaker is for the sentences which don’t contain a speaker. The plan is to calculate the word distributions of the speakers from sentences I do have and then calculate the probability that they spoke the unlabelled sentences.

This might not work perfectly as there could be new characters in those episodes but hopefully we can come up with something decent.

The full code for this example is on github if you want to have a play with it.

Any suggestions for improvements are always welcome in the comments.

About the author

I'm currently working on short form content at ClickHouse. I publish short 5 minute videos showing how to solve data problems on YouTube @LearnDataWithMark. I previously worked on graph analytics at Neo4j, where I also co-authored the O'Reilly Graph Algorithms Book with Amy Hodler.